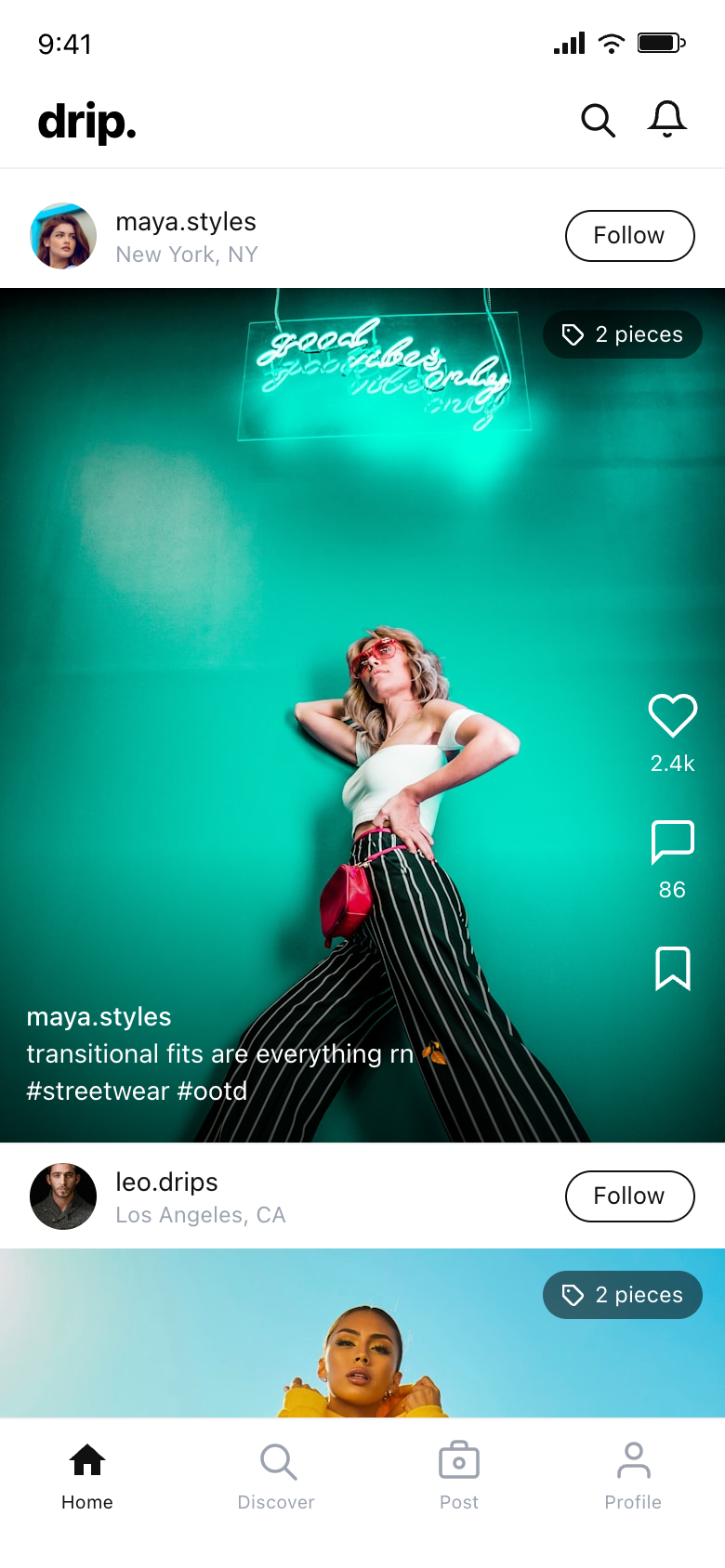

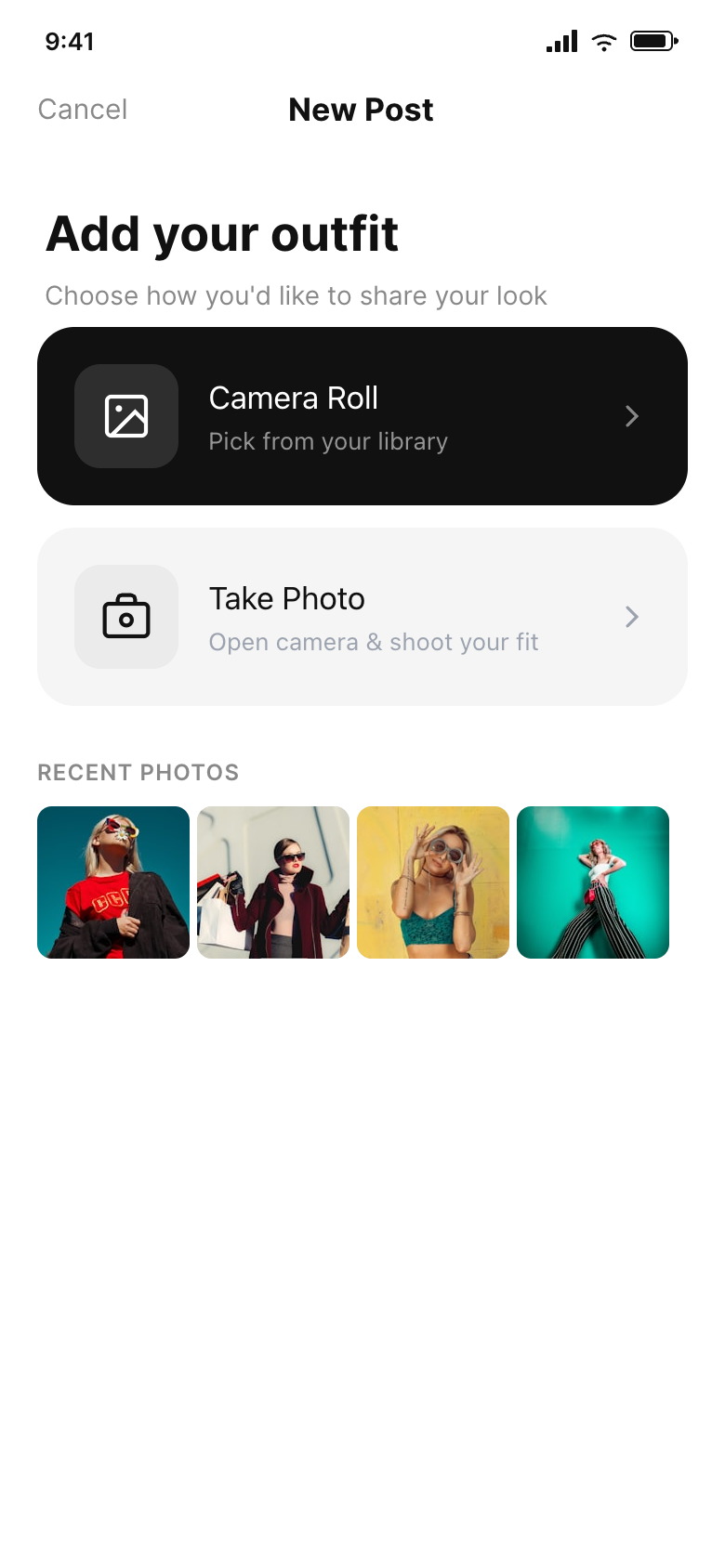

Gen Z fashion enthusiasts already create outfit breakdowns across TikTok and Instagram, but no platform is built for that behavior. drip. is a concept mobile app I ideated and prototyped in collaboration with Claude, using it as a design thinking partner to pressure-test product decisions before touching a single frame. Once the concept had legs, I moved into Paper to prototype the full upload-to-share flow: six screens, built and iterated entirely in the browser. The combination let me move from idea to interactive mock in a fraction of the time a traditional design-tool workflow would allow.

Intentional Light / Dark Split

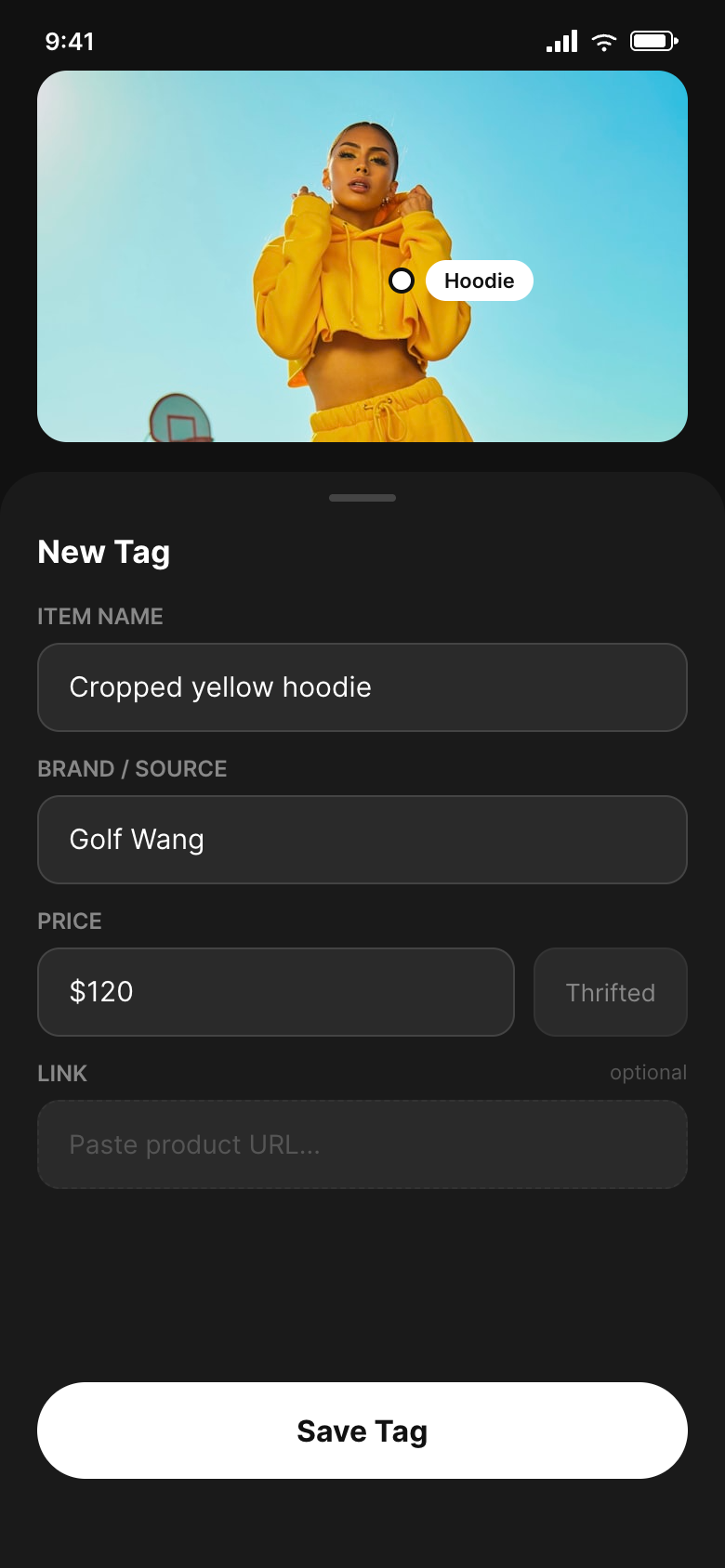

Browsing and social screens (home feed, photo source picker, review) stay white. The camera and editing context (photo preview, tag placement, tag detail form) flips to dark. This decision came out of a conversation with Claude about mental mode shifts in creative apps: the dark environment signals focus and reduces visual noise while working on a photo. I tested the split immediately in Paper, and it held up across all six frames without any adjustments.

Manual Tagging Over ML

I worked through the ML vs. manual tagging decision with Claude at length, mapping accuracy tradeoffs, cold-start data problems, and what purely computer-vision-based fashion apps had gotten wrong. The conclusion: manual tagging means the data is always right, and it captures niche and indie brands that no model would recognize. ML can be added later as a suggestion layer on top. Once the decision was made, Paper let me prototype the tap-to-tag interaction quickly enough to feel out the gesture before committing to it.

Social Interaction Patterns Borrowed Deliberately

The vertical action rail and caption-on-photo layout are borrowed from TikTok intentionally: not because they're trendy, but because these are gestures Gen Z users have already internalized for vertical media. Claude helped articulate why introducing a novel interaction model here would create unnecessary learning overhead for a behavior that should feel immediately natural. The goal was to make the platform feel like the place this behavior was always supposed to live.

This project is ongoing. The upload and tagging workflow is fully mapped across six Paper frames, but the Discover tab, Profile screen, and social graph mechanics remain to be designed. The next design challenge is the cold-start discovery problem: how to surface content to users who aren't following anyone yet. More broadly, this project confirmed that Claude and Paper together work as a genuine rapid-concept workflow, with Claude for thinking and Paper for building, both moving fast enough to keep up with the ideas.